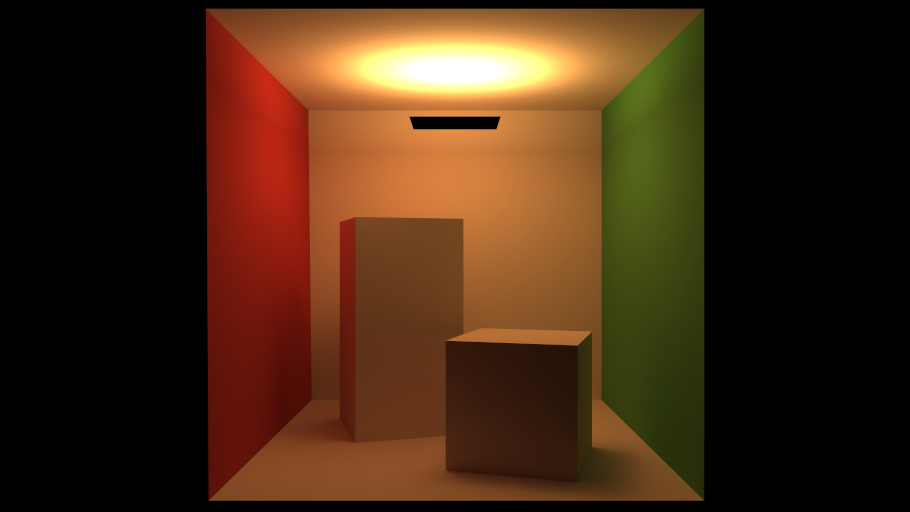

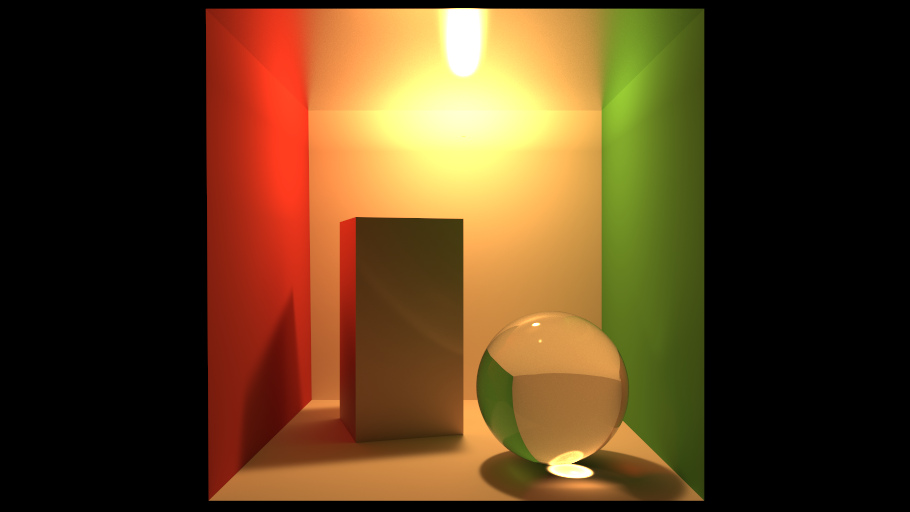

Neural Importance Sampling: Rendering Results

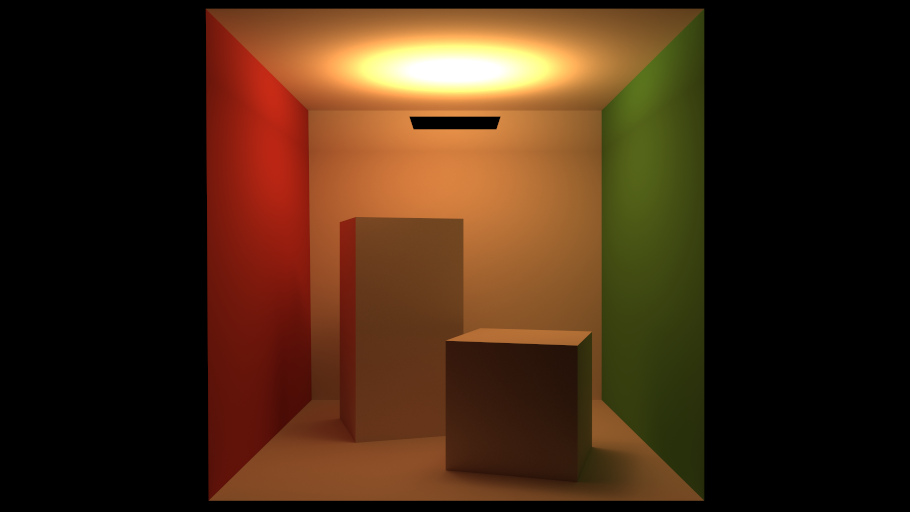

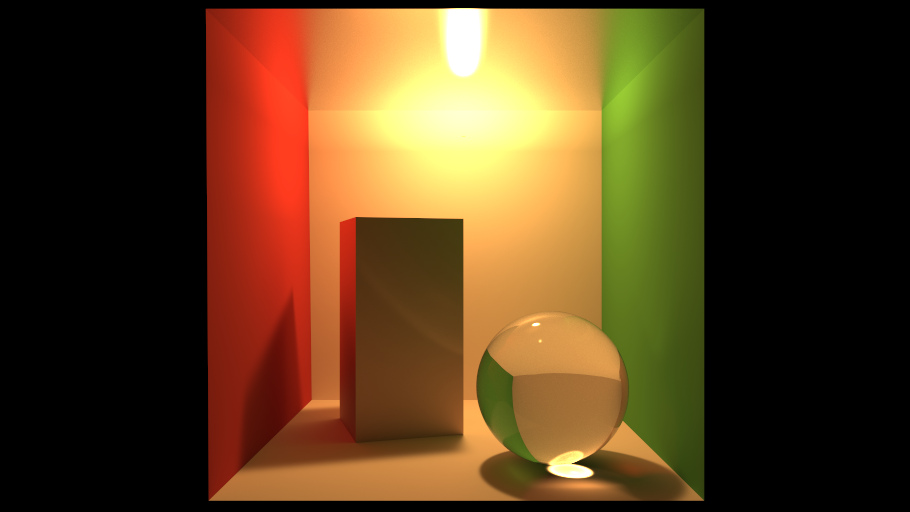

We propose to use deep neural networks for generating samples in Monte Carlo integration. Our work is based on non-linear independent components estimation (NICE), which we extend in numerous ways to improve performance and enable its application to integration problems. First, we introduce piecewise-polynomial coupling transforms that greatly increase the modeling power of individual coupling layers. Second, we propose to preprocess the inputs of neural networks using one-blob encoding, which stimulates localization of computation and improves inference. Third, we derive a gradient-descent-based optimization for the KL and the χ² divergence for the specific application of Monte Carlo integration with unnormalized stochastic estimates of the target distribution. Our approach enables fast and accurate inference and efficient sample generation independently of the dimensionality of the integration domain. We show its benefits on generating natural images and in two applications to light-transport simulation: first, we demonstrate learning of joint path-sampling densities in the primary sample space and importance sampling of multi-dimensional path prefixes thereof. Second, we use our technique to extract conditional directional densities driven by the triple product of the rendering equation and leverage them for path guiding. In all applications, our approach yields on-par or higher performance than competing techniques at equal sample count.
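To make the role of the sample density concrete, here is a minimal sketch of importance-sampled Monte Carlo integration in one dimension. It is not the neural sampler described above: the integrand `integrand` and the hand-picked density q(x) = 3x² are hypothetical stand-ins chosen so that the exact answer (1/4) is known. The sketch only illustrates why drawing samples from a density that resembles the integrand lowers the variance of the estimate at equal sample count.

```python
import random


def integrand(x):
    # Hypothetical integrand on [0, 1]; its exact integral is 1/4.
    return x ** 3


def uniform_estimate(n, rng):
    # Plain Monte Carlo: samples are uniform, so the density is q(x) = 1.
    return sum(integrand(rng.random()) for _ in range(n)) / n


def importance_estimate(n, rng):
    # Importance sampling with the assumed density q(x) = 3 x^2 on [0, 1].
    # Its CDF is x^3, so inverse-CDF sampling gives x = u^(1/3).
    total = 0.0
    for _ in range(n):
        u = 1.0 - rng.random()          # u in (0, 1], avoids x = 0 and q = 0
        x = u ** (1.0 / 3.0)
        q = 3.0 * x * x
        total += integrand(x) / q       # weight each sample by f(x) / q(x)
    return total / n


def sample_variance(estimator, n, runs, seed):
    # Empirical variance of an estimator over independent runs.
    rng = random.Random(seed)
    values = [estimator(n, rng) for _ in range(runs)]
    mean = sum(values) / runs
    return sum((v - mean) ** 2 for v in values) / (runs - 1)


if __name__ == "__main__":
    rng = random.Random(0)
    print("uniform   :", uniform_estimate(10_000, rng))
    print("importance:", importance_estimate(10_000, rng))
    print("var uniform   :", sample_variance(uniform_estimate, 100, 500, 1))
    print("var importance:", sample_variance(importance_estimate, 100, 500, 1))
```

Both estimators converge to the same value, but the importance-sampled one does so with noticeably lower variance because f(x)/q(x) = x/3 varies far less than f(x) itself. A learned sampler aims for the same effect when no such density can be written down by hand.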

Evaluation

To assess the quality of our method in the context of Monte Carlo light-transport simulation, we evaluate it in an equal-sample-count and an equal-time setting on 16 scenes. The equal-sample-count comparison features unidirectional path tracing, PSSPS [Guo et al. 2018], GMM-based path guiding [Vorba et al. 2014], PPG [Müller et al. 2017], and various flavors of our NPS and NPG techniques. In the equal-time setting, we compare unidirectional path tracing against PPG [Müller et al. 2017] and our product-based NPG.

Equal Sample Count:

Equal Time: